Tripura University Question Papers 2018: A Comprehensive Guide

Accessing Tripura University’s 2018 question papers in PDF format is crucial for effective exam preparation, offering valuable insights into past assessments and syllabus coverage.

Tripura University question papers, particularly those from 2018, represent a vital resource for students preparing for examinations. These papers offer a tangible understanding of the university’s examination patterns, question difficulty levels, and frequently tested concepts. Accessing these resources, often available in PDF format through the official university website and various educational portals like sample-papers.com and Getmyuni, allows students to familiarize themselves with the expected format and scope of the assessments.

The availability of 2018 papers for courses like B.Ed., MA English, BSc, and DELED provides targeted practice material. Studying these past papers isn’t simply about memorizing answers; it’s about developing a strategic approach to tackling exams and identifying areas needing further study. They are invaluable tools for self-assessment and building confidence.

Importance of Previous Year Question Papers

Previous year question papers, specifically the 2018 PDFs from Tripura University, are paramount for successful exam preparation. They transcend mere sample materials, functioning as realistic simulations of the actual examination environment. Analyzing these papers allows students to understand the examiner’s perspective, identifying recurring themes and crucial topics within each syllabus.

Downloading and studying these PDFs – available for courses like B.Ed., MA English, BSc, and DELED – facilitates effective time management during the exam. Students learn to prioritize questions and allocate time efficiently. Furthermore, these papers pinpoint individual weaknesses, enabling focused revision. Resources like sample-papers.com and Getmyuni provide easy access, making strategic preparation significantly more achievable and boosting overall academic performance.

Availability of 2018 Question Papers in PDF Format

Tripura University’s 2018 question papers are readily available in PDF format through multiple channels, ensuring accessibility for all students. Websites like sample-papers.com specifically offer a comprehensive collection of these past papers, categorized by course and semester – including B.Ed. (Semesters 1-4), MA English, BSc, and DELED (1st & 2nd Year).

Getmyuni also provides downloadable PDF versions, emphasizing their value for practice and study. Furthermore, students can explore resources directly within Tripura University’s official website and relevant departmental repositories. This widespread availability, coupled with the convenience of the PDF format, allows students to easily download, save, and review these crucial materials, maximizing their preparation efficiency and exam success.

Specific Courses & Question Paper Access

Question papers from 2018 for courses such as B.Ed., MA English, BSc, and DELED are available as PDF downloads.

B.Ed. Question Papers (Semesters 1-4) - 2018

For B.Ed. students at Tripura University, accessing 2018 question papers for all four semesters (1-4) is now simplified through PDF downloads. These resources are invaluable for understanding the exam structure, frequently asked questions, and the overall difficulty level of past papers.

Students can prepare efficiently by reviewing these materials, identifying key topics, and practicing with authentic exam questions. Websites like sample-papers.com offer a centralized location to download these PDFs. Working through these past papers should strengthen your preparation and build confidence for upcoming examinations.

MA English Question Papers - 2018

MA English students at Tripura University can access and download question papers from 2018 in PDF format. These past papers serve as a critical resource for understanding the exam pattern, the types of questions asked, and the expected depth of knowledge.

By studying these documents, students can effectively gauge their preparation level, identify areas needing improvement, and practice time management skills. Resources like sample-papers.com provide a platform for easy access to these valuable materials. Downloading and analyzing these 2018 papers is a strategic step towards achieving success in your MA English examinations at Tripura University, ensuring a focused and effective study approach.

BSc Question Papers - 2018

For BSc students at Tripura University preparing for their examinations, accessing the 2018 question papers in PDF format is an invaluable asset. These resources offer a realistic preview of the exam structure, question difficulty, and frequently covered topics within the BSc curriculum.

Students can utilize these past papers for self-assessment, identifying knowledge gaps, and honing their problem-solving abilities. Websites like sample-papers.com and Getmyuni are reported to host these documents, facilitating easy download and access. Thoroughly reviewing these 2018 BSc question papers will significantly enhance your exam preparation, leading to increased confidence and improved performance in your Tripura University assessments.

DELED Question Papers (1st & 2nd Year) - 2018

Tripura University’s DELED (Diploma in Elementary Education) students from both the 1st and 2nd year can greatly benefit from studying the 2018 question papers available in PDF format. These papers provide a clear understanding of the exam pattern, the types of questions asked, and the weightage given to different topics within the DELED syllabus.

Accessing these past papers allows students to practice time management, improve their answer-writing skills, and identify areas where they need further study. Third-party sites such as sample-papers.com list DELED papers for both years as PDF downloads. Working through these 2018 DELED question papers is a strategic step towards thorough preparation and greater confidence in your Tripura University examinations.

Where to Download Question Papers

Find Tripura University’s 2018 question papers on the official website, Getmyuni, and sample-papers.com, offering convenient PDF downloads for effective study.

Official Tripura University Website

The primary and most reliable source for Tripura University question papers, including those from 2018, is the official university website. While direct links to specific 2018 papers may require some navigation, the website serves as the central repository for all academic resources.

Students should begin their search within the relevant department or faculty section corresponding to their course of study (e.g., Education, English, Science). Look for sections labeled “Question Papers,” “Old Question Papers,” “Previous Year Papers,” or similar headings. Where papers are listed, they are usually offered as PDFs, which allows easy download and offline access.

Be prepared to potentially browse through archived lists or use the website’s search function, utilizing keywords like “2018,” “question paper,” and the specific course code. Patience and a systematic approach are key to locating the desired materials on the official Tripura University website.

Third-Party Educational Websites (sample-papers.com, Getmyuni)

Alongside the official Tripura University website, several third-party educational websites offer access to previous year question papers, including those from 2018. Sample-papers.com explicitly states its provision of Tripura University sample, old, and previous year question papers in PDF format. Getmyuni also lists Tripura University model question papers available for download as study resources.

However, it’s crucial to exercise caution when utilizing these platforms. While convenient, the accuracy and completeness of the papers cannot always be guaranteed. Always cross-reference with the official university website whenever possible to ensure you are studying the correct and most up-to-date materials.

These websites can be valuable supplements, particularly for initial searching, but should not be considered the definitive source. Verify the paper’s authenticity and relevance before relying on it for exam preparation.

University Department Resources

Directly contacting Tripura University’s individual departments represents a reliable avenue for obtaining 2018 question papers. Departmental libraries and academic offices often maintain archives of past exam materials for student reference. This approach ensures authenticity and accuracy, circumventing potential issues with third-party sources.

Furthermore, senior students or alumni within specific courses may possess collections of previous question papers. Networking with these individuals can provide access to valuable resources not readily available online. Faculty members are also excellent points of contact, potentially offering insights into past exam patterns and access to relevant materials.

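Although it requires more direct effort, going through university department resources is the most dependable way to confirm that a paper is legitimate. Because the syllabus may have changed since 2018, students should still check each paper against the current syllabus before relying on it.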

Understanding Question Paper Patterns

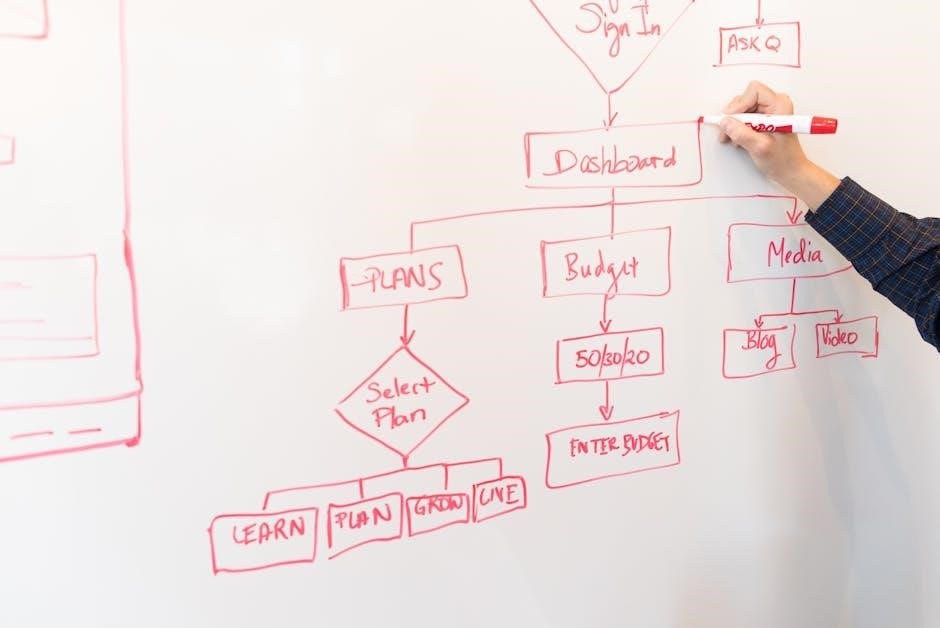

Analyzing 2018 papers reveals recurring question types and crucial topics, aiding focused preparation and effective time management during examinations.

Analyzing Question Types

Detailed examination of Tripura University’s 2018 question papers, available in PDF format, reveals a diverse range of assessment methods. Students can expect a mix of long-answer questions designed to test in-depth understanding, alongside short-answer questions focusing on core concepts. Multiple-choice questions frequently appear, evaluating foundational knowledge and quick recall.

Furthermore, pattern recognition shows a tendency towards application-based questions, requiring students to demonstrate practical problem-solving skills. Identifying these prevalent question types – analytical, descriptive, and objective – allows for targeted preparation. By understanding the weighting given to each type, students can prioritize their study efforts effectively, maximizing their potential for success in upcoming examinations. Consistent practice with past papers is key to mastering these formats.

Identifying Important Topics

Scrutinizing Tripura University’s 2018 question papers in PDF format highlights recurring themes and crucial subject areas. Consistent appearances of specific topics across multiple papers indicate their significance in the syllabus. For example, analysis reveals a strong emphasis on core concepts within each discipline, frequently tested through both descriptive and analytical questions.

Students should prioritize topics appearing repeatedly in previous assessments. These often represent fundamental principles and areas where professors expect a thorough understanding. Furthermore, identifying topics with higher weightage – indicated by the number of questions or allocated marks – is vital for focused preparation. Utilizing these past papers as a guide allows students to concentrate their efforts on the most relevant and frequently examined areas, improving their overall performance.
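The frequency check described above can be sketched in a few lines of Python. This is only an illustration: the paper labels and topic names below are hypothetical placeholders that a student would replace with topics tagged by hand while reading each paper, and `topic_frequency` is a helper name introduced here, not part of any university tool.

```python
from collections import Counter

# Hypothetical data: topics tagged by hand while reading each paper.
# The labels are placeholders, not taken from real Tripura University papers.
papers = {
    "paper_a": ["topic 1", "topic 2", "topic 3"],
    "paper_b": ["topic 1", "topic 3"],
    "paper_c": ["topic 1", "topic 4"],
}


def topic_frequency(tagged_papers):
    """Count in how many papers each topic appears (at most once per paper)."""
    counts = Counter()
    for topics in tagged_papers.values():
        counts.update(set(topics))  # set() avoids double counting within a paper
    return counts


# Topics sorted from most to least frequently examined.
ranked = topic_frequency(papers).most_common()
for topic, n in ranked:
    print(f"{topic}: appears in {n} of {len(papers)} papers")
```

Topics near the top of the ranking are candidates for priority revision; a topic that appears only once is not necessarily unimportant, so the ranking is best read alongside the official syllabus.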

Exam Weightage & Syllabus Coverage

Analyzing Tripura University’s 2018 question papers in PDF format reveals a clear pattern regarding exam weightage and syllabus coverage. A detailed review demonstrates that certain units or modules consistently receive greater emphasis than others, influencing the overall score potential. The papers effectively showcase the breadth of the syllabus, confirming which areas are considered essential by the examiners.

Students can determine the relative importance of each topic by observing the frequency and mark allocation in past papers. This allows for a strategic allocation of study time, focusing on high-weightage areas to maximize their chances of success. Furthermore, comparing the questions to the official syllabus ensures comprehensive coverage, preventing any overlooked topics and fostering a well-rounded understanding of the course material.
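As a rough sketch of this idea, the marks printed next to each question can be summed per unit and turned into a share of study time. The unit names, mark values, and the 20-hour budget below are assumptions made up for illustration, and `unit_weightage` and `study_hours` are hypothetical helper names.

```python
# Hypothetical (unit, marks) pairs copied by hand from one past paper.
questions = [
    ("Unit 1", 10),
    ("Unit 2", 5),
    ("Unit 1", 10),
    ("Unit 3", 15),
    ("Unit 2", 10),
]


def unit_weightage(items):
    """Return each unit's share of the total marks as a fraction of 1."""
    totals = {}
    for unit, marks in items:
        totals[unit] = totals.get(unit, 0) + marks
    grand_total = sum(totals.values())
    return {unit: marks / grand_total for unit, marks in totals.items()}


def study_hours(weights, total_hours):
    """Split a study-time budget in proportion to each unit's weightage."""
    return {unit: round(share * total_hours, 1) for unit, share in weights.items()}


weights = unit_weightage(questions)
plan = study_hours(weights, total_hours=20)  # 20 hours is an assumed budget
for unit, hours in plan.items():
    print(f"{unit}: {weights[unit]:.0%} of marks, about {hours} h of study")
```

A purely proportional split is a starting point only; a student who is already strong in a high-weightage unit may reasonably shift hours toward weaker ones.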

Utilizing Question Papers for Exam Preparation

Leveraging 2018 Tripura University question papers (PDFs) enables self-assessment, practice, and identification of weak areas, ultimately boosting exam confidence and performance.

Self-Assessment & Practice

Utilizing Tripura University’s 2018 question papers, readily available in PDF format, provides an invaluable opportunity for rigorous self-assessment. Students can simulate exam conditions, evaluating their understanding of core concepts and identifying areas needing further study.

Consistent practice with these past papers is paramount. By working through previous questions, students become familiar with the exam pattern, question types, and the level of difficulty expected. This proactive approach builds confidence and reduces exam-related anxiety.

Furthermore, self-assessment allows for personalized learning. Analyzing performance on these papers highlights specific weaknesses, enabling students to focus their efforts on targeted revision and improvement. Resources like sample-papers.com and Getmyuni offer convenient access to these essential study materials.

Time Management Strategies

Effectively utilizing Tripura University’s 2018 question papers in PDF format is key to mastering time management for exams. Students should practice solving papers under strict time constraints, mirroring actual exam conditions. This builds crucial speed and efficiency.

Analyzing past papers reveals the typical time required for different question types. This allows for strategic allocation of time during the exam, preventing overspending on challenging questions and ensuring all sections are addressed.
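One simple way to plan this allocation is to divide the available minutes in proportion to the marks each question carries, after setting aside a few minutes for review. The sketch below assumes a 180-minute paper worth 100 marks with a 20-minute review buffer; these figures are illustrative and are not the confirmed format of any Tripura University exam. `minutes_per_question` is a hypothetical helper name.

```python
def minutes_per_question(marks, total_minutes, review_minutes=10):
    """Allocate writing time to each question in proportion to its marks."""
    usable = total_minutes - review_minutes
    if usable <= 0:
        raise ValueError("review buffer leaves no time for answering")
    total_marks = sum(marks)
    return [usable * m / total_marks for m in marks]


# Assumed paper layout: six questions totalling 100 marks.
marks = [20, 20, 20, 15, 15, 10]
allocation = minutes_per_question(marks, total_minutes=180, review_minutes=20)

for number, (m, minutes) in enumerate(zip(marks, allocation), start=1):
    print(f"Q{number} ({m} marks): about {minutes:.0f} minutes")
```

Timing a practice attempt against this plan shows which question types regularly run over their share, which is where pacing practice should focus.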

Regular practice with these resources, found on platforms like sample-papers.com and Getmyuni, helps refine pacing. Students learn to prioritize questions, quickly identify solvable problems, and manage anxiety – all vital components of successful time management during high-stakes examinations.

Identifying Weak Areas

Analyzing Tripura University’s 2018 question papers in PDF format is a powerful method for pinpointing academic weaknesses. By attempting these past papers, students can objectively assess their understanding of specific topics and concepts.

Consistent errors or difficulties with certain question types highlight areas needing focused revision. This targeted approach, utilizing resources from websites like sample-papers.com and Getmyuni, is far more efficient than generalized studying.

Furthermore, comparing self-attempted answers with official solutions, where these are available, or with textbook and class-note references reveals gaps in knowledge and understanding. This self-assessment allows students to create a personalized study plan, concentrating on the areas where improvement is most needed for exam success.
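A minimal sketch of this kind of self-assessment: record the marks scored and the marks available per topic after a practice attempt, then flag topics that fall below a chosen threshold. The topic names, scores, and the 50% threshold are assumptions for illustration, and `weak_topics` is a hypothetical helper name.

```python
def weak_topics(results, threshold=0.5):
    """Return topics scoring below the threshold, weakest first.

    results maps topic -> (marks_scored, marks_available).
    """
    ratios = {
        topic: scored / available
        for topic, (scored, available) in results.items()
        if available > 0  # skip topics that were not examined
    }
    flagged = [topic for topic, ratio in ratios.items() if ratio < threshold]
    return sorted(flagged, key=lambda topic: ratios[topic])


# Hypothetical self-marked results from one practice attempt.
attempt = {
    "topic A": (12, 20),
    "topic B": (4, 15),
    "topic C": (9, 10),
    "topic D": (6, 15),
}

to_revise = weak_topics(attempt, threshold=0.5)
print("Revise first:", to_revise)
```

Repeating the same tally after each practice paper shows whether a flagged topic is improving or needs a different revision approach.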